Most "AI agent architecture tutorial" content skips the part that actually matters: what happens when you give Claude Code a complex task and walk away.

The answer, for a lot of developers, is chaos. Silent failures. The AI confidently rewrites something it wasn't supposed to touch. Hours of agentic runtime that produce code you can't trust.

But a small group of engineers in the Claude Code community have been quietly figuring out how to make autonomous AI coding actually work. What they're building isn't vibe coding — it's structured, auditable agentic architecture. Here's what I've learned from watching them.

The Problem With Pure Vibe Coding

One developer on r/ClaudeCode did something worth paying attention to: they logged 100 hours with Claude Code and 20 hours with Codex, then wrote up what they actually learned.

The finding wasn't about which model is smarter. It was about architecture. Pure "prompt and pray" doesn't scale. What does work:

- Commit by phase, not by task. Break work into discrete phases. Commit after each one. If the AI goes sideways in phase 3, you have a clean rollback point.

- Run a code-review sub-agent on every commit. Don't review manually. Spin up a specialized agent whose only job is auditing the last commit against the original spec.

- Write a 100-line CLAUDE.md. Not a short description — a dense behavioral specification. What the AI should never touch. Which patterns to follow. What "done" means for this codebase.

This is the core insight: the discipline that makes human engineering teams reliable also makes AI agent teams reliable. The tool changed; the principles didn't.

Wrapping Claude Code as a Controlled Agent

Once you accept that agentic Claude needs guardrails, the next question is: how do you actually enforce them programmatically?

One answer comes from a project called Claudraband, built by a developer on Hacker News. The idea: wrap the Claude Code TUI itself inside a controlled terminal — either tmux or xterm.js — so you can mediate every interaction programmatically rather than typing prompts manually.

This architecture gives you:

- Automated multi-step workflows without manually babysitting the prompt

- Programmatic intervention — you can inspect Claude's output before it proceeds to the next step

- A clean separation between the Claude Code layer and your orchestration logic

It's essentially building a harness around an agent that already exists, rather than writing a new agent from scratch. If your goal is to automate long, structured tasks — the kind that take 30-60 minutes of careful work — this pattern is significantly more reliable than a single long-context prompt.

The $0.02 Sub-Agent: Routing Secondary Tasks to Cheaper Models

Here's a more aggressive cost-optimization trick that's directly relevant to any AI agent architecture tutorial: you don't have to use Claude for everything Claude Code does.

A developer on r/ClaudeAI built a wrapper that speaks the OpenAI Chat Completions protocol, then added it to their CLAUDE.md. The result: Claude Code can route secondary tasks — boilerplate generation, simple lookups, summarization — to cheaper models like DeepSeek, OpenRouter-hosted models, or local Ollama instances at around $0.02 per call.

The main agent (Claude) handles the high-stakes reasoning. The sub-agents handle the grunt work.

This is a real AI agent architecture pattern — often called a "hierarchical agent" or "router" pattern — and it has two practical benefits:

- You stay within Claude Pro usage limits for complex tasks

- Your total inference cost drops dramatically on mixed-complexity workflows

For anyone building production agentic systems, this matters more than benchmark scores.

What This Looks Like as a Stack

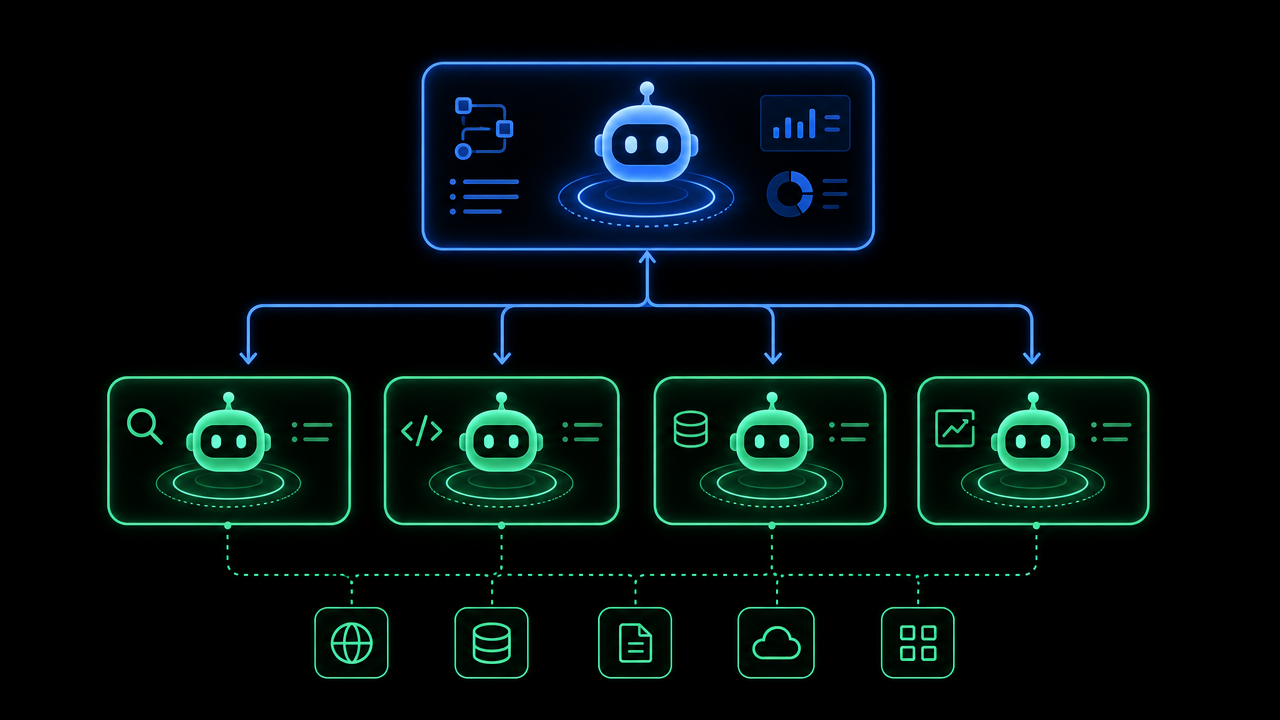

Pull these patterns together and you get a rough architecture that's emerging from real practitioners:

[Orchestrator Layer]

↓ Phase-based task breakdown

[Claude Code — Main Agent]

↓ Routes secondary tasks

[Cheap Sub-Agent — DeepSeek / Ollama]

↓ Each commit triggers

[Code-Review Sub-Agent]

↓ Mediated by

[Claudraband / tmux harness]

The CLAUDE.md file acts as the behavioral spec that ties all of this together — it's the document that tells the main agent what kind of orchestrator it's working inside.

Why This Matters for Solo Builders

If you're building AI SaaS as a solo founder, the leverage here is significant. The gap between "I can use Claude Code" and "I have a reliable agentic pipeline" is the difference between occasional productivity gains and a genuinely scalable development practice.

The builders doing this well aren't the ones with the most credits — they're the ones who treated AI agent architecture like a real engineering discipline. Same guardrails. Same commit hygiene. Same code review. Just with an AI doing most of the execution.

The benchmark wars (Claude vs. DeepSeek vs. GPT) are mostly noise for this use case. What matters is the architecture you wrap around whichever model you choose.

If you're building something with Claude Code and have discovered patterns that work, I'd genuinely like to hear about them.